AUGMENTED REALITY IS A FUN AND MEMORABLE EXPERIENCE.

Augmented Reality is a technology that overlays computer-generated imagery on a user’s view of the real world, resulting in an amazing moment for players! The world saw this concept EXPLODE in 2016 with the release of Pokémon GO. Its design gave players the feeling of catching real Pokémon in their own communities and put augmented reality into the common vocabulary of many people.

At Graphite Lab, we believe Augmented Reality can better engage an audience and build a more memorable fantasy around any interactive experience by blending the real world with a virtual one.

We love partnering with content agencies! If you are interested in bringing augmented reality technologies to your work in order to serve your clients better, reach out! We’d love to chat!

Over 30 million gamers saw Augmented Reality features in Pokémon GO

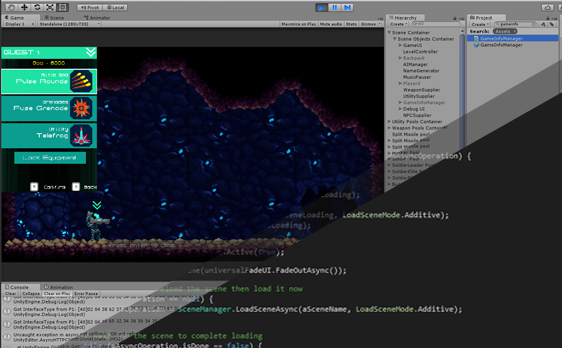

#madewithunity

Our team is made up of programmers, artists and designers who are all experts in developing new creative experiences using Unity 3D as our engine of choice. Unity gives us a stable engine in which we bring together 3D models, artwork, animation and code to create amazing experiences for mobile devices and consoles.

We use Unity’s ARKit plugin to build apps for iOS 11 and gain easy access to core ARKit functionality, like motion tracking, plane finding, and light estimation. There are also features for scaled content, plane visualization, occlusion and shadows, and much more.

We’ve also developed using Vuforia, which supports AR experiences on both iOS and Android. This allows us to develop for handheld and even head-worn devices and unlock new categories of apps that overlay digital content onto physical objects.

“So, what the heck is this ARKit I keep hearing about?”

Apple joins the AR movement

With the release of iOS 11, Apple introduced ARKit, a new framework that allows us to easily map a space and project some incredible augmented reality experiences into it on iPhone and iPad. ARKit lets us reliably blend the digital objects and information we create with the environment around you, freeing users to interact with the world in entirely new ways.

With ARKit, we can analyze the scene presented by the camera view and quickly detect horizontal planes in the room, like tables and floors. ARKit can also track and place objects on smaller feature points. It uses the camera sensor to estimate the total amount of light available in a scene and applies the correct amount of lighting to virtual objects. Because ARKit finds these surfaces on its own, we don’t have to rely on tracking objects for reliable placement (though there are still reasons you may want to use them!).
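To make the light estimation idea a little more concrete, here is a minimal Python sketch of how an estimated ambient light value might be used to brighten or dim a virtual object so it matches the room. This is an illustration of the concept only, not ARKit or Unity code: the function name `lit_color` is hypothetical, and the sketch assumes the light estimate is an ambient intensity where 1000 represents neutral lighting.

```python
# Conceptual sketch only: not ARKit or Unity API code.
# Assumption: ambient intensity is reported so that 1000 means "neutral" lighting.
NEUTRAL_INTENSITY = 1000.0


def lit_color(base_rgb, ambient_intensity, neutral=NEUTRAL_INTENSITY):
    """Scale an RGB color (components in 0..1) by the estimated room light.

    A dim room (intensity below neutral) darkens the virtual object;
    a bright room lightens it, clamped so no channel exceeds 1.0.
    """
    scale = max(ambient_intensity, 0.0) / neutral
    return tuple(min(1.0, channel * scale) for channel in base_rgb)


if __name__ == "__main__":
    cube_color = (0.8, 0.4, 0.2)
    print("dim room:   ", lit_color(cube_color, 500.0))
    print("neutral:    ", lit_color(cube_color, 1000.0))
    print("bright room:", lit_color(cube_color, 2000.0))
```

A real engine does far more than scale a color, but the principle is the same: the virtual object’s lighting follows what the camera sees.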

When you put iPhone X and ARKit together, they enable a revolutionary capability for robust face tracking. Using the TrueDepth camera, an app can detect the position, topology, and expression of the user’s face, all with high accuracy and in real time, making it easy to apply live selfie effects or use facial expressions to drive a 3D character. This is pretty incredible, considering the licensing costs for other solutions have been quite high.

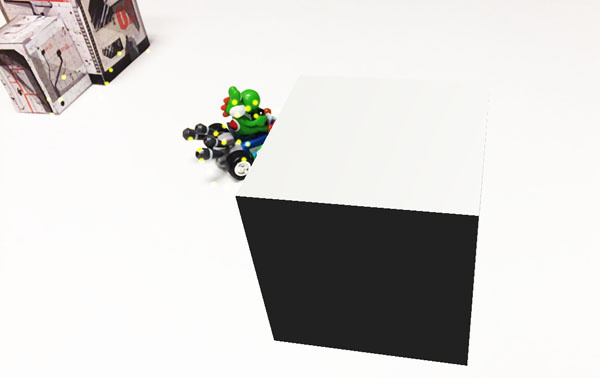

Yoshi is real and the cube is not. You can probably tell just by looking at this simple demo. What ARKit does is process the table that Yoshi is sitting on and build a 3D plane that we can then place objects on. The result in this demo is a simple cube, but the sky’s the limit on what and how we can blend virtual and real-world objects.
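For the curious, the geometry behind dropping a cube onto a detected tabletop can be sketched in a few lines of Python. This is a simplified illustration under our own assumptions, not the code behind the demo and not ARKit’s API: the detected table is treated as a flat horizontal plane at a known height, and the helper names `place_on_plane` and `cube_center` are hypothetical.

```python
# Conceptual sketch only: the detected table is modeled as the plane y = plane_y.
# Function names are hypothetical and do not come from ARKit or Unity.

def place_on_plane(ray_origin, ray_dir, plane_y):
    """Return the point where a camera ray hits the horizontal plane, or None.

    ray_origin: (x, y, z) position of the camera in meters.
    ray_dir:    (x, y, z) direction the user is pointing or tapping along.
    plane_y:    height of the detected horizontal plane in meters.
    """
    ox, oy, oz = ray_origin
    dx, dy, dz = ray_dir
    if abs(dy) < 1e-9:
        return None  # ray runs parallel to the table and never hits it
    t = (plane_y - oy) / dy
    if t <= 0:
        return None  # the table is behind the camera along this ray
    return (ox + t * dx, plane_y, oz + t * dz)


def cube_center(hit_point, cube_size):
    """Lift the cube by half its size so it rests on the plane instead of sinking into it."""
    x, y, z = hit_point
    return (x, y + cube_size / 2.0, z)


if __name__ == "__main__":
    # Camera held 1.5 m up, looking forward and down at a 0.75 m high table.
    hit = place_on_plane((0.0, 1.5, 0.0), (0.0, -1.0, -1.0), 0.75)
    if hit is not None:
        print("cube goes at:", cube_center(hit, 0.1))
```

Once the plane is known, placing any virtual object is mostly a matter of finding where the user’s view meets that surface.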